My CUDA program crashed during execution, before memory was flushed. As a result, device memory remained occupied.

I'm running on a GTX 580, for which `nvidia-smi --gpu-reset` is not supported.

Placing `cudaDeviceReset()` at the beginning of the program only affects the current context created by the process; it doesn't free memory that was allocated before it.

I'm accessing a Fedora server with that GPU remotely, so a physical reset is quite complicated.

So the question is: is there any way to flush the device memory in this situation?

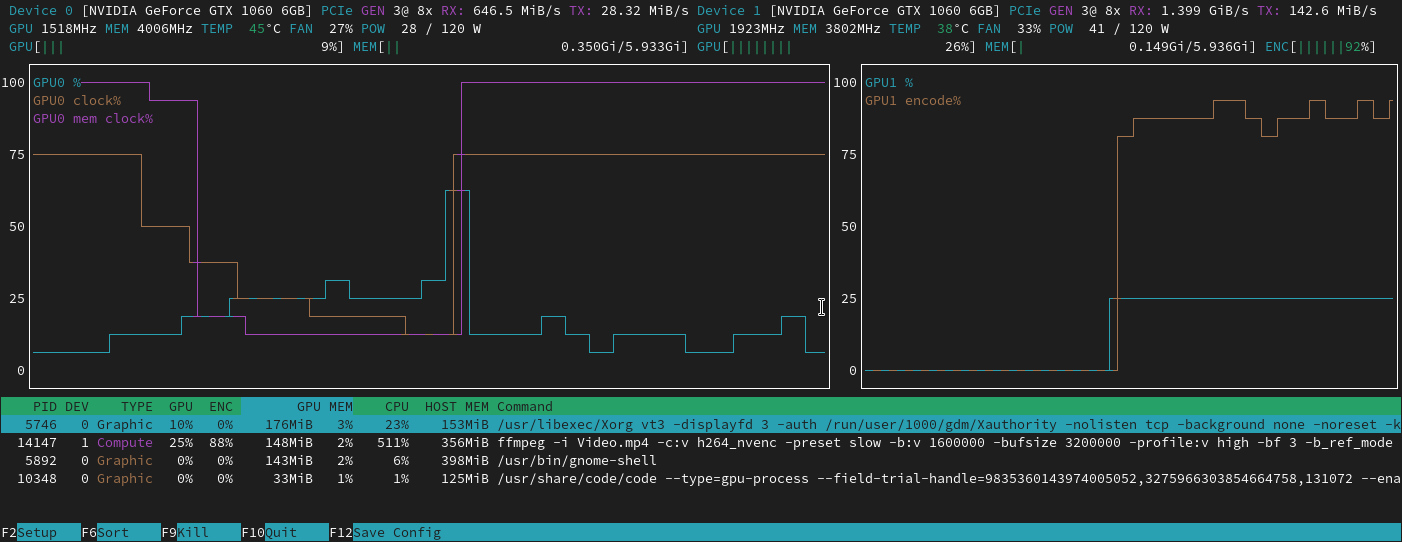

`nvidia-smi --gpu-reset` is not available, but I can still get some information with `nvidia-smi -q`. In most fields it gives 'N/A', but some information is useful. Here is the relevant output: `Memory Usage Total : 1535 MB Used : 1227 MB Free : 307 MB` – timdim

… `nvidia` driver. – tera

If you run `ps -ef |grep 'whoami'` and the results show any processes that appear to be related to your crashed session, kill those. (the single quote ' should be replaced with backtick ` ) – Robert Crovella

… `sudo rmmod nvidia`? – Przemyslaw Zych

`nvidia-smi -caa` worked great for me to release memory on all GPUs at once. – David Arenburg
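The suggestion to look for leftover processes could be sketched roughly as follows. This is only an illustration, not a guaranteed fix: the function names `list_my_processes` and `list_gpu_holders` are made up for this example, it uses `ps -u` rather than the `ps -ef | grep` form from the comment, and it assumes that the device nodes live under `/dev/nvidia*` and that `fuser` is installed. Without root, `fuser` typically reports only your own processes.

```shell
#!/usr/bin/env bash
# Sketch: find leftover processes owned by the current user that may still
# hold a CUDA context, so they can be reviewed and killed by hand.

# Hypothetical helper: list PID and command line of every process
# owned by the current user.
list_my_processes() {
    ps -u "$(id -un)" -o pid=,args=
}

# Hypothetical helper: show processes that have an NVIDIA device node open.
# Assumes the nodes are /dev/nvidia* and that fuser is available.
list_gpu_holders() {
    if compgen -G "/dev/nvidia*" > /dev/null && command -v fuser > /dev/null; then
        # fuser exits non-zero when nothing holds the files; ignore that.
        fuser -v /dev/nvidia* 2>&1 || true
    else
        echo "no /dev/nvidia* nodes found or fuser not installed" >&2
    fi
}

list_my_processes
list_gpu_holders

# After reviewing the output, a stale process can be terminated manually:
#   kill <PID>       # or: kill -9 <PID> if it does not exit
```

Nothing is killed automatically here; the idea is to inspect the lists first and only then kill the process that belongs to the crashed session.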